Summary

Claude Code uses 3-4x more tokens than Codex on identical tasks, and in exchange tends to produce more thorough, deterministic output. That single fact explains almost every tradeoff between these tools: thoroughness costs tokens, and tokens cost money.

| Dimension | OpenAI Codex | Claude Code |

|---|---|---|

| SWE-bench Pro | 56.8% | 55.4% |

| SWE-bench Verified | N/A (different variant) | 80.8% |

| Terminal-Bench 2.0 | 77.3% | 65.4% |

| Speed (tok/s) | 1,000+ (Cerebras WSE-3) | ~200 (standard inference) |

| Token usage per task | 1x (baseline) | 3.2-4.2x more |

| Context window | 200K tokens | 1M tokens |

| Multi-agent model | Cloud sandbox per task | Agent Teams (coordinated) |

| $20/mo limits | 30-150 msgs/5hr | Standard (hits caps faster) |

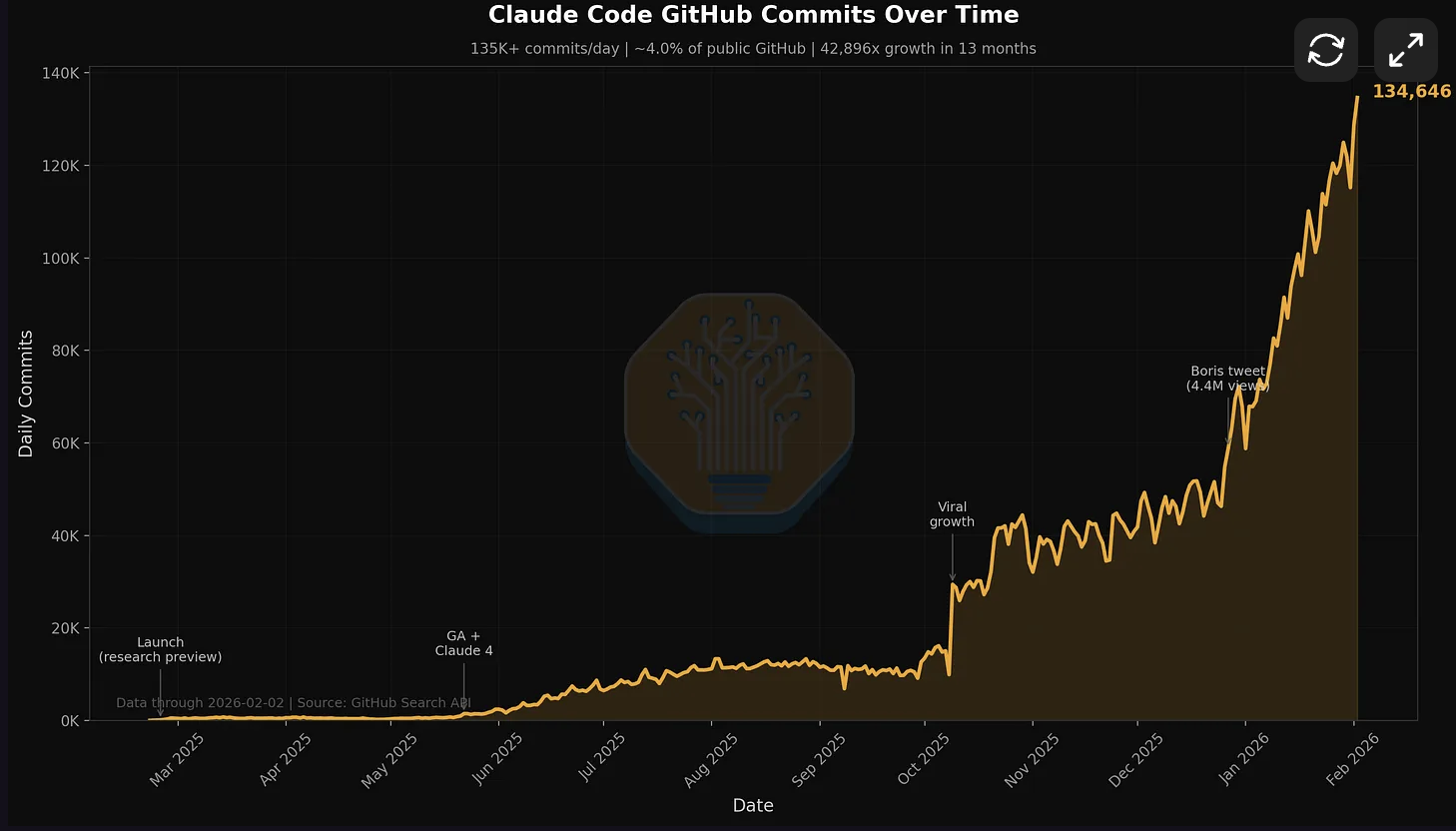

| GitHub commits/day | Not disclosed | 135K (~4% of all public) |

| Open source | Apache-2.0, 365 contributors | Proprietary CLI, 51 contributors |

SWE-bench Pro Accuracy: stock vs with WarpGrep search subagent (Source: Morph internal benchmarks, SWE-bench Pro public set)

Terminal-Bench 2.0 Score: real-world terminal task completion (Source: Terminal-Bench 2.0, Feb 2026)

Quick Decision Matrix

- Choose Codex if: You want cloud sandbox isolation per task, generous usage limits, or the new Codex macOS app for managing multiple agents

- Choose Claude Code if: You need coordinated agent teams with shared task lists, 1M token context, or deterministic multi-file refactoring

- Choose both if: You want Codex for speed + Claude's agent teams for orchestration

Benchmark Warning

Anthropic reports SWE-bench Verified (80.8%) while OpenAI reports SWE-bench Pro Public (56.8%). These are different benchmark variants with different problem sets. Direct score comparison across them is not valid. We use SWE-bench Pro and Terminal-Bench 2.0 as the only apples-to-apples comparisons available.

The Architectural Shift

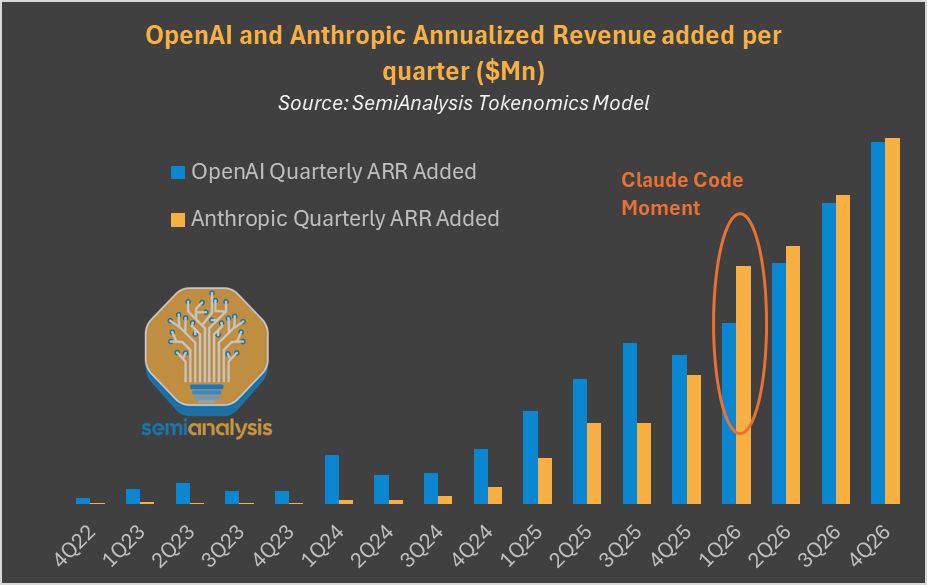

Both tools now support multi-agent workflows with dedicated context windows per subtask. This is one of the first lasting primitives in agent programming. Anthropic is valued at $380B with $14B ARR. OpenAI is pushing Codex onto non-Nvidia hardware. Both companies consider coding agents their primary growth vector.

Token Usage: Claude Code vs Codex on Identical Tasks (Claude uses 3-4x more tokens but produces more thorough output)

Source: Independent benchmark by community testers, Feb 2026. Claude's higher token count correlates with more deterministic, thorough outputs.

Source: SemiAnalysis Tokenomics Model. Anthropic's quarterly ARR growth accelerated sharply at the "Claude Code Moment" in Q1 2026.

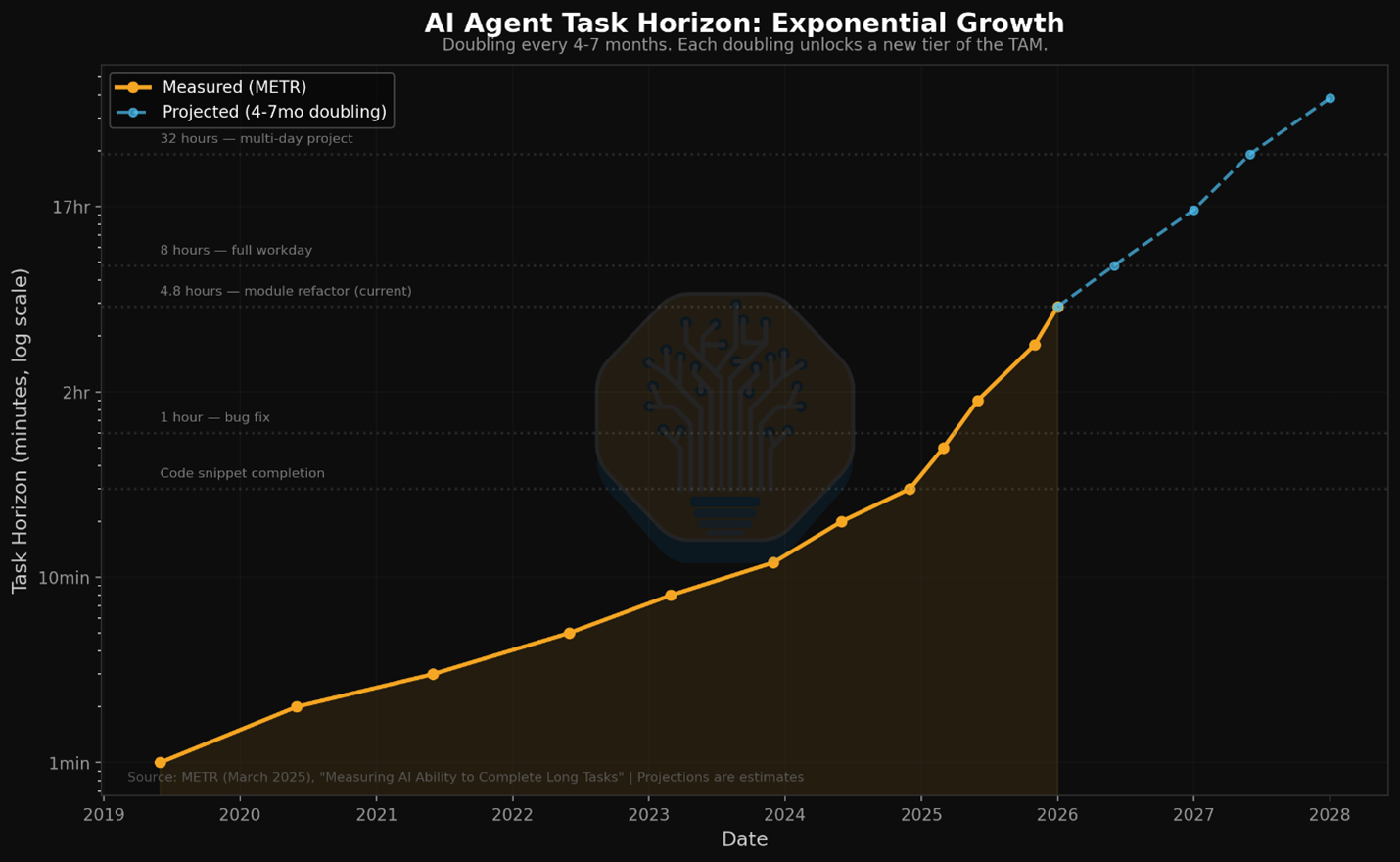

Source: METR / SemiAnalysis. AI agent task horizons are doubling every 4-7 months.

Stat Comparison

Synthetic benchmarks tell part of the story. These 5-bar ratings reflect daily workflow impact across speed, autonomy, consistency, subagent support, and limit generosity.

OpenAI Codex

The speed demon with cloud sandboxes

"Maximum velocity with isolated cloud sandboxes per task."

Claude Code

The orchestrator with agent teams

"Best-in-class subagent coordination, but you'll pay for it in limits."

GitHub and Marketplace Stats (Feb 28, 2026)

Claude Code

- 71,500 GitHub stars, 51 contributors

- v2.1.63 (Feb 28, 2026), ships multiple releases/day

- VS Code: 5.2M installs, 4.0/5 rating

- Agent SDK v0.2.49 (rebranded from "Claude Code SDK")

- ~135K GitHub commits/day (~4% of all public commits)

OpenAI Codex

- 62,365 GitHub stars, 365 contributors

- v0.106.0 (Feb 26, 2026), 553 releases in 10 months (1.8/day avg)

- VS Code: 4.9M installs, 3.4/5 rating

- Rust-native CLI, zero-dependency install

- GPT-5.3-Codex-Spark: 1,000+ tok/sec on Cerebras WSE-3

Source: SemiAnalysis / GitHub Search API. Claude Code now accounts for ~4% of all public GitHub commits.

Reading the Stats

Codex optimizes for speed and autonomy at the cost of consistency. Claude Code optimizes for consistency and orchestration at the cost of limits. Neither dominates across all dimensions. But the VS Code rating gap is notable: Claude Code at 4.0/5 vs Codex at 3.4/5 suggests higher user satisfaction despite tighter limits.

Subagent Architecture: How Each Tool Isolates Context

Forget benchmarks for a moment. The most important shift in February 2026 is that both Codex and Claude Code now support multi-agent workflows. A dedicated context window per task is emerging as one of the first lasting primitives in agent programming, and these tools implement it in fundamentally different ways.

Why Subagents Matter

The single biggest limitation of AI coding agents is context window pollution. You ask the agent to refactor authentication, it reads 40 files, and by the time it gets to the last file it has forgotten the patterns from the first. Subagents solve this by giving each subtask its own dedicated context window. The auth refactor agent does not share context with the test-writing agent. Each one focuses.

| Aspect | Codex (Feb 2026) | Claude Code (Feb 2026) |
|---|---|---|
| Multi-agent model | Codex App: separate threads per project | Agent Teams: coordinated sub-agents |
| Isolation model | Cloud sandbox per task (container) | Git worktree per agent (local) |
| Task coordination | Independent threads, manual switching | Shared task list with dependency tracking |
| Agent communication | No inter-agent messaging | Direct messaging + broadcast |
| Context preservation | Isolated by default, no cross-pollination | Agents can read shared files, team config |
| Execution environment | Cloud (internet disabled for security) | Local machine (full access) |

Codex: Cloud Sandbox Isolation

Each Codex task runs in its own cloud container via the new macOS Codex App (Feb 2, 2026). Tasks are organized by project in separate threads. Fast, isolated, but agents cannot coordinate with each other. Think independent workers in separate rooms.

Claude Code: Agent Teams

Claude Code's Agent Teams (research preview) let you spawn sub-agents that share a task list with dependency tracking, send messages to each other, and work in parallel on git worktrees. Each agent gets a dedicated context window but they coordinate autonomously. Think a team in one office.

Dedicated Context Per Task

Both approaches validate the same insight: a dedicated context window per task is a lasting primitive. The question is whether you want isolated speed (Codex) or coordinated depth (Claude). For greenfield tasks that are independent of each other, Codex's isolation model wins. For complex refactors where subtasks have dependencies, Claude's coordinated teams win.
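To make "shared task list with dependency tracking" concrete, here is a minimal Python sketch of how a lead agent could decide which sub-agents may start. It is an illustration under our own assumptions, not Claude Code's implementation: `Task` and `schedule_waves` are hypothetical names, and tasks are grouped into "waves" whose members have all their dependencies satisfied, so each wave can run in parallel in separate context windows.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    """One entry in a hypothetical shared task list."""
    name: str
    agent: str
    depends_on: list = field(default_factory=list)


def schedule_waves(tasks):
    """Group tasks into waves. Every task in a wave has all of its
    dependencies finished in earlier waves, so a wave's tasks can run
    in parallel, each in its own agent and context window."""
    known = {t.name for t in tasks}
    pending = {t.name: set(t.depends_on) for t in tasks}
    for name, deps in pending.items():
        missing = deps - known
        if missing:
            raise ValueError(f"{name} depends on unknown task(s): {sorted(missing)}")
    done = set()
    waves = []
    while pending:
        # A task is ready once every dependency is in the done set.
        ready = sorted(name for name, deps in pending.items() if deps <= done)
        if not ready:
            raise ValueError(f"dependency cycle among: {sorted(pending)}")
        waves.append(ready)
        done.update(ready)
        for name in ready:
            del pending[name]
    return waves


# Implementer is blocked on research; the test writer can start immediately.
team = [
    Task("research", agent="researcher"),
    Task("implement", agent="implementer", depends_on=["research"]),
    Task("tests", agent="test-writer"),
]
print(schedule_waves(team))  # [['research', 'tests'], ['implement']]
```

The example mirrors the payment-integration walkthrough that follows: the researcher and test writer start together, and the implementer waits for research. A Codex-style setup with independent threads corresponds to every task having an empty `depends_on` list, which puts everything in a single wave.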

Claude Code: Agent Teams in Action

```bash
# Spawn a team for a complex feature
claude "Build the payment integration"

# Claude Code automatically:
# 1. Creates a team with task list
# 2. Spawns researcher agent → explores Stripe SDK patterns
# 3. Spawns implementer agent → writes the code (blocked until research done)
# 4. Spawns test-writer agent → writes tests in parallel
# Each agent has its own context window. No pollution.
# Agents message each other when done: "research complete, found 3 patterns"
# Task dependencies prevent implementer from starting before researcher finishes
```

Usage Limits: What the Pricing Pages Leave Out

This is the section that will save you hundreds of dollars. The pricing pages don't tell you the real story about limits.

The $20 Tier Reality Check (Feb 2026)

ChatGPT Plus ($20/mo) gives 30-150 messages per 5-hour window with GPT-5.3-Codex. Claude Pro ($20/mo) still hits limits faster on equivalent workloads. Both now offer overflow at API rates, but the base allocation gap persists. OpenAI also added an $8/mo Go tier for light users.

| Tier | Codex (ChatGPT) | Claude Code | Key Difference |
|---|---|---|---|
| $8/month | ChatGPT Go (limited) | N/A | Entry tier for Codex only |
| $20/month | Plus: 30-150 msgs/5hr | Pro: standard limits | Codex gets more sessions |
| $100/month | N/A | Max 5x: 5x Pro usage | Claude-only mid-tier |
| $200/month | Pro: 300-1,500 msgs/5hr | Max 20x: 20x Pro usage | Both generous at this tier |
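The table implies something the headline prices hide: how much extra usage each upgrade buys per dollar. A minimal sketch, assuming each tier's usage scales exactly with the multiplier the table states (for Codex Pro, the 10x jump from 30-150 to 300-1,500 messages). It only compares tiers within one vendor, because Claude Pro's "standard limits" are not given as message counts, and it leaves out the unquantified $8 Go tier.

```python
# Price (USD/month) and usage multiplier relative to the same vendor's $20 tier,
# read off the table above. Codex Pro's 300-1,500 msgs/5hr is 10x Plus's 30-150.
TIERS = {
    "Codex Plus": (20, 1),
    "Codex Pro": (200, 10),
    "Claude Pro": (20, 1),
    "Claude Max 5x": (100, 5),
    "Claude Max 20x": (200, 20),
}


def usage_per_dollar(price, multiplier, base_price=20):
    """Usage bought per dollar, normalized so the vendor's $20 tier is 1.0."""
    return multiplier / (price / base_price)


for name, (price, multiplier) in TIERS.items():
    print(f"{name}: {usage_per_dollar(price, multiplier):.1f}x usage per dollar")
```

Every tier comes out at 1.0 except Claude Max 20x, which lands at 2.0: it is the only plan in the table that buys more usage per dollar than its vendor's $20 tier.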

The Tier Structure in Feb 2026

OpenAI now offers three tiers: $8 (Go), $20 (Plus), and $200 (Pro). Anthropic offers three: $20 (Pro), $100 (Max 5x), and $200 (Max 20x). The $8 Go tier is new and suits light Codex usage. Both platforms now let you buy additional credits at API rates when you hit limits, which reduces the sting of caps.

The real cost question in 2026 is not the subscription price; it's how many agent sessions you get. With subagent workflows, each agent team run burns through limits faster because you are running multiple context windows in parallel. Claude's Agent Teams are powerful but eat limits proportionally to the number of sub-agents spawned.
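The "proportional" cost of Agent Teams can be modeled as a simple linear sum. A minimal sketch, assuming each sub-agent consumes a fixed amount against the same window budget as the lead agent; every number below is hypothetical, since neither vendor publishes plan limits as token counts.

```python
# Hypothetical numbers throughout: real plan limits are not published as token counts.
WINDOW_BUDGET = 2_000_000   # assumed tokens available per 5-hour window
LEAD_TOKENS = 150_000       # assumed usage of the lead agent per run
SUBAGENT_TOKENS = 200_000   # assumed usage of each sub-agent per run


def tokens_per_team_run(lead, per_subagent, n_subagents):
    """Linear model: each sub-agent fills its own context window, so its
    usage adds on top of the lead agent's."""
    return lead + n_subagents * per_subagent


def runs_per_window(budget, lead, per_subagent, n_subagents):
    """Whole team runs that fit in one window under the linear model."""
    return budget // tokens_per_team_run(lead, per_subagent, n_subagents)


for n in (0, 2, 4):
    runs = runs_per_window(WINDOW_BUDGET, LEAD_TOKENS, SUBAGENT_TOKENS, n)
    print(f"{n} sub-agents: {runs} runs per window")
```

Under these assumed numbers, a solo lead agent fits 13 runs per window, a lead with two sub-agents fits 3, and a lead with four fits 2. The exact figures mean nothing; the point is that runs per window fall roughly in inverse proportion to team size.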

Token Economics Nobody Discusses

A data point that should concern Claude users: in identical benchmark tasks, Claude Code used 3.2-4.2x more tokens than Codex.

| Task | Codex Tokens | Claude Tokens | Ratio |
|---|---|---|---|
| Figma Plugin Build | 1,499,455 | 6,232,242 | 4.2x more |
| Scheduler App | 72,579 | 234,772 | 3.2x more |
| API Integration | ~180,000 | ~650,000 | 3.6x more |
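The ratios in the table can be checked directly, and pooling the tasks shows where the "4x" headline comes from. A short Python sketch using the table's figures (the API Integration row is approximate in the source).

```python
from statistics import median

# (Codex tokens, Claude tokens) per task, from the table above.
# The API Integration figures are approximate in the source.
TOKENS = {
    "Figma Plugin Build": (1_499_455, 6_232_242),
    "Scheduler App": (72_579, 234_772),
    "API Integration": (180_000, 650_000),
}


def per_task_ratios(tokens):
    """Claude-to-Codex token ratio for each task."""
    return {task: claude / codex for task, (codex, claude) in tokens.items()}


def pooled_ratio(tokens):
    """Ratio of total Claude tokens to total Codex tokens across all tasks."""
    codex_total = sum(codex for codex, _ in tokens.values())
    claude_total = sum(claude for _, claude in tokens.values())
    return claude_total / codex_total


ratios = per_task_ratios(TOKENS)
for task, ratio in ratios.items():
    print(f"{task}: {ratio:.1f}x")
print(f"median per-task ratio: {median(ratios.values()):.1f}x")
print(f"pooled ratio: {pooled_ratio(TOKENS):.2f}x")
```

Per task, the ratios reproduce the table (4.2x, 3.2x, 3.6x). Pooled across all three tasks the ratio is about 4.06x, because the Figma build dominates the totals; the median task ratio is closer to 3.6x.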

Why Claude Uses More Tokens

Claude's higher token usage is not necessarily waste. It correlates with more thorough, deterministic outputs. Claude "thinks out loud" more, asks clarifying questions, and provides more detailed explanations. Whether this is valuable depends on your use case.

Claude Token Philosophy

More tokens = more context = more thorough. Claude prioritizes completeness over efficiency, which helps with complex refactoring but burns through limits faster.

Codex Token Philosophy

Fewer tokens = faster completion = lower cost. Codex prioritizes efficiency, which means faster results but potentially less thorough coverage of edge cases.

API Pricing Reality (Feb 2026)

If you are using the API directly (not the subscription), the pricing has shifted:

- Claude Opus 4.6 API: $5 input / $25 output per 1M tokens

- Claude Sonnet 4.6 API: $3 input / $15 output per 1M tokens

- GPT-5.3-Codex: pricing varies, generally lower per-token

The interesting play is Claude Sonnet 4.6. It scores 79.6% on SWE-bench Verified, only 1.2 points behind Opus 4.6, at 60% of Opus 4.6's per-token price. For agent team workloads where you are spawning multiple sub-agents, using Sonnet 4.6 for worker agents and Opus 4.6 only for the lead agent can cut costs significantly.
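The worker/lead split is easy to cost out. A minimal sketch using the per-1M-token prices listed above; the token counts for the lead and workers are hypothetical, the model labels are ours rather than API model IDs, and the sketch ignores caching, batch discounts, and any other pricing adjustments.

```python
# Per-1M-token API prices from the list above (USD): (input, output).
# Keys are our own labels, not API model identifiers.
PRICES = {"opus-4.6": (5, 25), "sonnet-4.6": (3, 15)}


def run_cost(model, input_tokens, output_tokens):
    """API cost in USD for one agent's usage, at list prices only."""
    price_in, price_out = PRICES[model]
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000


# Hypothetical team run: one lead agent plus four workers.
LEAD = (300_000, 40_000)     # assumed (input, output) tokens for the lead
WORKER = (500_000, 60_000)   # assumed (input, output) tokens per worker
N_WORKERS = 4

all_opus = run_cost("opus-4.6", *LEAD) + N_WORKERS * run_cost("opus-4.6", *WORKER)
mixed = run_cost("opus-4.6", *LEAD) + N_WORKERS * run_cost("sonnet-4.6", *WORKER)
print(f"all Opus: ${all_opus:.2f}, Opus lead + Sonnet workers: ${mixed:.2f}")
print(f"saving: {1 - mixed / all_opus:.0%}")
```

With these assumed counts, the all-Opus run costs $18.50 and the Opus-lead/Sonnet-worker run costs $12.10, a saving of about 35%. Because Sonnet 4.6's input and output prices are both 60% of Opus 4.6's, the saving is always 40% of whatever share of the bill the workers account for.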

The Configuration Tax: Setup Time Reality

Codex works surprisingly well out of the box. Claude Code unlocks its potential through configuration. This is not marketing. It is architectural.

Codex: Rebuilt in Rust, Zero Dependencies

- CLI rewritten from TypeScript to Rust in Feb 2026, zero-dependency install

- New Codex macOS app for multi-agent management

- Voice input via spacebar (v0.105.0): hold to record, transcribed via Wispr

- Diff-based forgetting: novel memory management where stale context is diffed away

- Plugin system in Codex App: MCP shortcuts, @mentions in review comments

- JetBrains, Xcode, GitHub Actions integrations now GA

- Configurable sandbox modes: workspace-write, read-only, danger-full-access

- Open-source (Apache-2.0) with 365 contributors

Claude Code: Configuration Is the Feature

- CLAUDE.md file for project-specific instructions

- Agent Teams for multi-agent orchestration (research preview)

- Auto-memory: Claude automatically saves project context across sessions

- Remote Control: scan QR code to control sessions from mobile (Claude Max only)

- Hooks system for custom automation (worktree, teammate, task events)

- MCP (Model Context Protocol) for tool integration

- Task management with dependency tracking between agents

- VS Code extension (5.2M installs) with inline diffs, @-mentions, plan review

Anthropic's Cowork desktop app for Claude Code. Folder-based project management with task routing. Source: SemiAnalysis.

Configuration Cost

Claude Code's configurability is both its superpower and its tax. You can build incredibly sophisticated workflows, but you'll spend hours doing it. One developer reported spending "most of my engineering time not on writing code... but on Claude Code configuration."

Claude Code: CLAUDE.md Example

```markdown
# CLAUDE.md - Project-specific instructions

## Code Style
- Use TypeScript strict mode
- Prefer functional components
- No `any` types without explicit comment

## Architecture
- All API calls go through /lib/api
- State management via Zustand
- Never modify package.json without asking

## Testing
- Write tests before implementation (TDD)
- Minimum 80% coverage for new code
- Use React Testing Library patterns
```

With Claude Code, you can completely replace the system prompt. This is useful for creating specialized agents, but it is a time investment that Codex does not require.

Failure Mode Analysis: When Things Go Wrong

Both tools fail. Understanding HOW they fail tells you which failure mode you can tolerate.

Codex Failure Patterns

- Variability: Same prompt produces different results across runs

- Off-plan drift: Ignores instructions when "in the zone"

- Defensive over-engineering: Adds unnecessary error handling

- Style ignorance: Doesn't adapt to codebase patterns

- Context switching: Loses track in complex multi-file edits

- Multi-agent CSV fan-out: No mid-batch error recovery, one failure can stall the pipeline

- Security: a zsh sandbox bypass was fixed in v0.106.0, but the bug raises questions about the sandbox trust model

Community Signal

A "Codex is rapidly degrading" thread on the OpenAI community forum has been gaining traction. Multiple users report declining output quality over the past month. Worth monitoring if you are evaluating Codex for a long-term workflow.

Claude Code Failure Patterns

- Over-interruption: Asks permission too frequently (mitigated by auto-accept mode)

- Context window issues: Compaction hits after 5-6 prompts

- Limit walls: Stops mid-task when hitting caps

- Eager gap-filling: Makes assumptions without flagging them

- Token bloat: Verbose explanations eat into limits

For context on Claude Code's real-world reliability: Rakuten reported 99.9% numerical accuracy on a 12.5M-line codebase. At that scale, even small failure rates compound. The gap between Codex and Claude on consistency is measurable in production.

"Codex sometimes flags plausible edge-case database query concurrency bugs that I have to manually verify for 30 minutes, only to conclude they're hallucinations." (HN commenter)

The Recovery Question

When Codex fails, you typically need to re-prompt from scratch. When Claude fails, you can often guide it back on track through conversation. This makes Claude failures feel more recoverable, even if they happen more often due to limit issues.

The Context Window Problem: The Hidden Battleground

This is where Codex has done something that feels almost magical. Multiple developers report that Codex's context window feels "infinite" compared to Claude Code.

| Aspect | GPT-5.3-Codex | Claude Opus 4.6 |
|---|---|---|
| Raw context window | 400K tokens | 1M tokens (beta) |
| Memory management | Diff-based forgetting (stale context diffed away) | Automatic summarization (infinite conversations) |
| Large file handling | Smooth up to 2000+ lines | Handles massive files with 1M context |
| Multi-agent context | Isolated per sandbox | Shared via team config + task list |
| Long session stability | Excellent | Improved with compaction, still degrades eventually |

The context window situation changed in early 2026. Claude's 1M token context (in beta) and automatic compaction for infinite-length conversations address the biggest complaint users had. Codex at 400K tokens is still generous, but Claude now has the raw context advantage. The trade-off: Claude's subagents each consume from your context/limit budget, while Codex's cloud sandboxes are more isolated.

Codex's diff-based forgetting is a novel approach to memory management: instead of compacting old context into summaries, stale context is diffed away, keeping only the delta. This preserves more structural understanding of the codebase than summarization. For a deep dive on why context rot degrades agent performance and how context compression techniques like FlashCompact address it, see our analysis.
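The difference between the two memory strategies can be shown on a toy context. This is a minimal Python sketch, not either vendor's implementation: summarization is modeled as folding the oldest entries into one fixed-size summary, and "stale context is diffed away" is modeled, as our own assumption, as keeping only the newest snapshot of each file. `Entry`, both strategy functions, and all token counts are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Entry:
    kind: str    # "file" (a file snapshot), "chat" (a turn), or "summary"
    key: str     # file path, turn id, or summary label
    tokens: int


def total(ctx):
    return sum(e.tokens for e in ctx)


def summarize_oldest(ctx, budget, summary_tokens=500):
    """Summarization-style compaction: drop the oldest entries and stand in
    one fixed-size summary for them until the context fits the budget."""
    kept = list(ctx)
    dropped = 0
    while kept and total(kept) + (summary_tokens if dropped else 0) > budget:
        kept.pop(0)
        dropped += 1
    if dropped:
        kept.insert(0, Entry("summary", f"{dropped} entries", summary_tokens))
    return kept


def drop_stale_snapshots(ctx):
    """Assumed reading of 'stale context is diffed away': keep only the newest
    snapshot of each file; chat turns are untouched."""
    latest = {e.key: i for i, e in enumerate(ctx) if e.kind == "file"}
    return [e for i, e in enumerate(ctx) if e.kind != "file" or latest[e.key] == i]


history = [
    Entry("file", "a.py", 3000),
    Entry("chat", "t1", 400),
    Entry("file", "a.py", 3100),   # a.py edited
    Entry("chat", "t2", 400),
    Entry("file", "b.py", 2000),
    Entry("file", "a.py", 3200),   # a.py edited again
]
pruned = drop_stale_snapshots(history)
summarized = summarize_oldest(history, budget=6000)
print([e.key for e in pruned], total(pruned))
print([e.key for e in summarized], total(summarized))
```

Both strategies shrink the 12,100-token history to about 6K, but they keep different things: summarization folds turns `t1` and `t2` into a summary, while dropping stale snapshots keeps every conversation turn plus the current version of each file. A real system would also have to handle partial edits, deleted files, and tool output, which this toy ignores.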

Where Codex Wins

Greenfield Projects

Starting from scratch? Codex excels at rapid scaffolding in cloud sandboxes. The Codex App lets you manage multiple greenfield tasks in separate threads.

Long Autonomous Sessions

Codex runs autonomously in cloud containers. You can steer mid-task without losing context, a new Feb 2026 feature. Claude's Agent Teams work in parallel but burn limits faster.

Budget-Conscious Teams

ChatGPT Plus ($20) still gets more sessions than Claude Pro ($20). The new $8 Go tier makes Codex accessible for even lighter usage.

Terminal-Heavy Workflows

GPT-5.3-Codex leads Terminal-Bench 2.0 at 77.3% (massive jump from 64%) vs Claude's 65.4%. If your workflow is terminal-native (DevOps, scripts, CLI tools), Codex is measurably better. Voice input via spacebar makes it even faster for terminal workflows.

Best For: Spec-Driven Workflows

If you write detailed specs and want to context-switch while the AI works, Codex is your tool. The new Codex App (macOS, launched Feb 2) makes this workflow first-class: organize tasks by project, run them in cloud sandboxes, check results when ready. GPT-5.3-Codex-Spark runs on Cerebras WSE-3 at 1,000+ tokens per second, 15x faster than the standard model. This is OpenAI's first production deployment away from Nvidia, which signals their seriousness about Codex as a standalone product.

Where Claude Code Wins

Coordinated Multi-Agent Refactoring

Claude's Agent Teams let you split a complex refactor across multiple sub-agents with dependency tracking. Each agent gets a dedicated context window, so there is no pollution between tasks. This is Claude Code's strongest differentiator.

Massive Codebase Navigation

With 1M token context (beta) and 80.8% SWE-bench Verified, Claude handles large codebases better. Rakuten confirmed 99.9% accuracy on 12.5M lines.

Strict Plan Following

Need the AI to stick exactly to a spec? Claude is significantly better at instruction following. Codex often 'goes off plan' when it thinks it knows better.

Custom Automation via Hooks

Claude Code's hooks system lets you trigger actions on agent events such as worktree creation, teammate idle, and task completion. Build CI-like pipelines around your agent workflows.
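A tool-level event is the simplest place to see how a hook works. The sketch below enforces a rule like the CLAUDE.md line "Never modify package.json without asking" in code rather than in the prompt. It rests on assumptions to verify against the current hooks documentation: that a `PreToolUse` command hook receives a JSON payload on stdin with `tool_name` and `tool_input`, that file-editing tools pass the target as `tool_input["file_path"]`, and that exit code 2 blocks the call and returns stderr to the agent. The `--hook` argument and the protected-file list are our own.

```python
"""Hypothetical PreToolUse hook that blocks edits to package.json.

Assumptions (check the current Claude Code hooks documentation): the hook
receives a JSON payload on stdin with "tool_name" and "tool_input", file-edit
tools put the target path in tool_input["file_path"], and exit code 2 blocks
the tool call and returns stderr to the agent.
"""
import json
import sys
from pathlib import PurePath
from typing import Optional

PROTECTED_FILES = {"package.json"}
EDIT_TOOLS = {"Edit", "MultiEdit", "Write"}


def block_reason(payload: dict) -> Optional[str]:
    """Return why the call should be blocked, or None to allow it."""
    if payload.get("tool_name") not in EDIT_TOOLS:
        return None
    path = payload.get("tool_input", {}).get("file_path", "")
    if PurePath(path).name in PROTECTED_FILES:
        return f"Editing {path} needs human approval (project rule)."
    return None


def run_hook(stdin_text: str) -> int:
    """Process one hook invocation and return the process exit code."""
    reason = block_reason(json.loads(stdin_text))
    if reason:
        print(reason, file=sys.stderr)
        return 2  # assumed: exit code 2 = block the tool call
    return 0


# "--hook" is this script's own convention so it can be imported or tested
# without reading stdin; it is not a Claude Code flag.
if __name__ == "__main__" and sys.argv[1:] == ["--hook"]:
    sys.exit(run_hook(sys.stdin.read()))
```

To wire it up, register it as a `PreToolUse` command hook in `.claude/settings.json` with a matcher such as `Edit|MultiEdit|Write`, running `python3 <path-to-script> --hook`. The `--hook` guard lets the same file be imported and tested without blocking on stdin.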

Best For: Multi-Agent Orchestration

If you want to architect a solution and let a team of agents execute it in parallel, with dependency tracking, inter-agent messaging, and shared task lists, Claude Code's Agent Teams are unmatched. 16 Claude agents wrote a 100K-line C compiler in Rust that compiles the Linux kernel 6.9 (99% GCC torture test pass rate, ~$20K API cost). That is a proof point for agent teams handling genuinely complex engineering, not just CRUD scaffolding.

"For production coding, I create fairly strict plans. Codex goes off plan most of the time. Claude follows them." (HN commenter)

Hybrid Workflow: Using Both

Power users figured this out early: these tools complement each other. The optimal workflow is not choosing one. It is knowing when to switch.

The Optimal Hybrid Flow

- Prototype with Codex: Fast iteration, explore multiple approaches

- Review with Claude: Code review, catch edge cases Codex missed

- Refactor with Claude: Complex architectural changes with deterministic outputs

- Final polish with Codex: Quick fixes and formatting

Power User Hybrid Workflow (Feb 2026)

```bash
# 1. Scaffold with Codex in cloud sandbox
codex "Implement user authentication with JWT, following patterns in /lib/auth"
# Runs in isolated container, 15-20 min autonomous

# 2. Orchestrate review + hardening with Claude Agent Teams
claude "Review the auth implementation. Spawn a security reviewer agent
and a test writer agent. Security reviewer checks for OWASP top 10.
Test writer creates integration tests. Block merge until both pass."
# Claude spawns 2 sub-agents with dedicated context windows
# Security agent finds 3 vulnerabilities, test agent writes 12 tests
# Both report back to the lead agent via messaging

# 3. Quick fix with Codex
codex "Fix these 3 security issues: [paste Claude's findings]"
# Done in 2 minutes, cloud sandbox, no context pollution
```

Cross-Tool Review

Several developers report using Codex specifically to review Claude's work. "I use Codex for review tasks. When working on something complex, I frequently ask Codex to review Claude's work, and it does a good job catching mistakes."

Decision Framework: Pick Your Tool in 30 Seconds

| Your Situation | Best Choice | Why |
|---|---|---|
| Multi-agent orchestration | Claude Code | Agent Teams with task deps + messaging |
| Isolated sandbox execution | Codex | Cloud containers per task, no cross-pollination |
| Budget: $20/month | Codex | More sessions per dollar on ChatGPT Plus |
| Deterministic outputs | Claude Code | Same prompt = same result |
| Terminal-heavy workflows | Codex | 77.3% Terminal-Bench vs 65.4% Claude |
| Large codebase refactoring | Claude Code | 1M context + agent teams for divide-and-conquer |
| Greenfield project | Codex | Faster scaffolding in cloud sandbox |
| Custom automation | Claude Code | Hooks system for agent lifecycle events |
| Want open-source CLI | Codex | Apache-2.0, Rust-native |
| Max context window | Claude Code | 1M tokens (beta) vs 400K |

Frequently Asked Questions

Is Codex or Claude Code better for coding in 2026?

It depends on your workflow. GPT-5.3-Codex leads Terminal-Bench 2.0 at 77.3% and SWE-bench Pro (56.8% vs 55.4%), and excels at autonomous tasks in cloud sandboxes. Claude Opus 4.6 leads SWE-bench Verified at 80.8%. Claude Code now authors ~4% of all public GitHub commits, roughly 135K per day, projected to reach 20%+ by end of 2026 (SemiAnalysis). The biggest differentiator is subagent architecture: isolated execution (Codex) vs orchestrated sub-agents (Claude).

What are Claude Code Agent Teams?

Agent Teams (research preview, Feb 2026) let you spawn multiple sub-agents that each get a dedicated context window. They share a task list with dependency tracking and can message each other directly. Each agent works in a git worktree for isolation. This is one of the first lasting primitives in agent programming: a dedicated context window per task prevents the context pollution that degrades single-agent performance on complex work.

Which has better usage limits: Codex or Claude Code?

ChatGPT Plus ($20/mo) gives 30-150 messages per 5-hour window. Claude Pro ($20/mo) still hits limits faster on equivalent workloads. Both now offer overflow at API rates. Note that Agent Teams multiply limit consumption since each sub-agent uses its own context; plan accordingly.

Can I use both Codex and Claude Code together?

Yes, and the hybrid workflow is increasingly common. Use Codex for rapid prototyping and autonomous implementation in cloud sandboxes, then use Claude Code's Agent Teams for code review, security auditing, and complex refactoring with multiple coordinated agents.

Which is more open source?

Codex CLI is fully open-source under Apache-2.0, Rust-native, with 62,365 GitHub stars and 365 contributors. It has shipped 553 releases in 10 months (1.8/day average). Claude Code (71,500 stars, 51 contributors) ships more frequently with multiple releases per day but is a proprietary Anthropic product. Both tools' underlying models are proprietary.

WarpGrep v2 Boosts Any Coding Agent on SWE-bench Pro

WarpGrep v2 adds 2-3 points on SWE-bench Pro to every model tested: Opus 4.6 from 55.4% to 57.5%, Codex from 57.0% to 59.1%. It works as an MCP server inside Claude Code, Codex, Cursor, and any tool that supports MCP. Better search = better context = better code.

Sources

- SemiAnalysis: Claude Code is the Inflection Point (Feb 5, 2026)

- Anthropic Engineering: Building a C Compiler with Claude Agents

- GPT-5.3-Codex System Card

- Terminal-Bench 2.0 Leaderboard

- Scale AI SWE-Bench Pro Leaderboard

- Hacker News Discussion: Codex vs Claude Code (Dec 2025)

- Build.ms: Codex vs Claude Code (Today)

- Builder.io: Codex vs Claude Code Comparison

- Composio: Claude Code vs OpenAI Codex

- OpenAI Codex Pricing Documentation

- Northflank: Claude Code vs OpenAI Codex 2026

- Claude Code vs Cursor: Full Comparison